Your campaign report says marketing generated 1,000 MQLs. Salesforce shows fewer new leads than expected. Sales starts questioning attribution, ops starts checking field mappings, and someone suggests the sync “probably lagged”.

That’s usually not lag. It’s an integration problem that wasn’t tested well enough.

In B2B teams running Salesforce and HubSpot together, integration software testing protects revenue far more than it protects code. It keeps lifecycle stages aligned, stops duplicate records from polluting routing logic, and prevents small API failures from turning into bad forecasts. In Canadian environments, that work also has a compliance layer. Cross-border data handling, field-level consent logic, and PIPEDA-sensitive mappings raise the cost of getting “mostly working” wrong.

Why Your MarTech Integration Is Leaking Revenue

The most common failure pattern is quiet. A form fills in HubSpot. The contact syncs to Salesforce, but one required field is blank, a workflow fires in the wrong order, or a duplicate rule blocks record creation. Nothing crashes. No one gets paged. But your funnel data is now wrong.

That error spreads fast. Lead source reporting becomes unreliable. SDRs work incomplete records. Sales managers lose confidence in campaign attribution. Marketing starts defending numbers instead of improving performance.

In Canada, B2B startups face 22% pipeline leakage from untested integrations, and test-first integration yields 3.2x faster GTM ROI than big-bang approaches. At the same time, only 18% track defect escape rates tied to attribution accuracy, according to Tweag’s integration testing analysis. That combination is familiar in RevOps. Teams feel the business pain, but they often don’t measure the technical cause.

Where the leakage usually starts

A Salesforce and HubSpot stack leaks revenue in predictable places:

- Lead creation gaps: A HubSpot contact qualifies, but Salesforce never receives a lead because validation rules reject the payload.

- Lifecycle drift: HubSpot says MQL, Salesforce says working lead, and reporting logic treats them as different stages.

- Attribution corruption: Campaign member status, original source, or UTM fields don’t sync cleanly, so revenue reporting stops matching reality.

- Routing failures: Territory or owner assignment depends on fields that arrive late, arrive blank, or arrive in the wrong format.

Practical rule: If sales and marketing disagree on the number, trust the process less, not the people.

Teams often frame this as a platform issue. It rarely is. It’s usually an orchestration issue. Salesforce and HubSpot can both do their jobs, but the handoff between them hasn’t been tested under realistic conditions.

That’s why I treat integration testing as part of revenue governance. If the sync decides who gets followed up, how fast they get contacted, and what revenue gets attributed back to source, then testing belongs in the same conversation as pipeline hygiene and forecast quality.

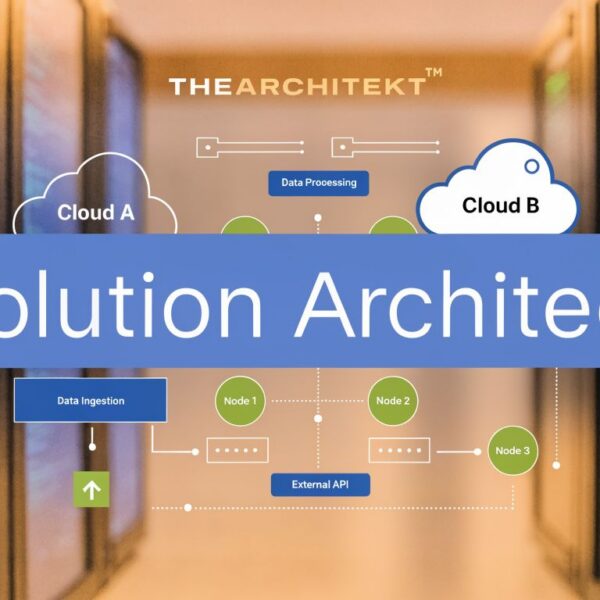

If you’re sorting out the basics first, a clear primer on platform integration strategy helps align the business workflow before you touch test scripts. And because integration quality also depends on how safely your apps connect and expose data, this SaaS security checkup is a useful companion read when reviewing connected systems.

Developing Your MarTech Integration Test Strategy

Ad hoc testing fails for the same reason ad hoc RevOps fails. It reacts to symptoms instead of controlling risk. A few manual spot checks before launch might catch obvious breaks, but they won’t protect a lead-to-revenue process that spans forms, enrichment, scoring, routing, CRM creation, and reporting.

A formal strategy pays off. Integration testing adoption among Toronto-based B2B firms increased by 65% from 2019 to 2022, correlating with a 42% drop in post-release defects. Mid-market companies also reported that 78% of integration failures were caught pre-deployment, according to an IBM-cited report. That matters because post-release defects in RevOps aren’t abstract bugs. They show up as missed follow-up, duplicate touches, broken dashboards, and bad executive decisions.

Start with business-critical flows

Not every integration deserves the same level of scrutiny. Test the flows that change revenue outcomes first.

For most Salesforce and HubSpot environments, that means:

1. MQL to sales handoff: A qualified HubSpot contact should create or update the correct Salesforce record, preserve source data, and trigger the right assignment path.

2. Campaign attribution sync: Source, medium, campaign, content, and lifecycle timestamps need to survive the trip between systems without being overwritten or normalised badly.

3. Lifecycle and status changes: If sales disqualifies, converts, or re-engages a lead in Salesforce, downstream automation in HubSpot needs to respond correctly.

4. Lead-to-account matching: Duplicate prevention, contact association, and account ownership logic need testing whenever enrichment or routing rules are involved.

Choose the right integration approach

The testing method should reflect the complexity of your stack, not the preference of whoever writes the scripts.

A practical comparison:

| Approach | Where it works | Where it breaks down | RevOps fit |
|---|---|---|---|
| Big-bang | Small, simple integrations | Hard to isolate failures | Weak for active Salesforce-HubSpot stacks |
| Incremental | Stable module-by-module releases | Can be slower to organise | Strong when teams release in phases |
| Hybrid sandwich | Mixed dependencies and fast-moving systems | Needs planning and environment discipline | Often the best choice for MarTech integrations |

The hybrid path is usually the most resilient in practice. You test lower-level services and mappings in isolation, then test upper-level workflows with stubs or mocks where needed, then validate end to end.

A good strategy doesn’t ask, “Did the sync run?” It asks, “What revenue process breaks if this sync behaves incorrectly?”

Define pass criteria before writing tests

Most weak test programmes share one problem. Nobody agreed on what “working” means.

Use explicit pass criteria such as:

- Data integrity: Required fields arrive in the right format and in the right destination field.

- Timing: Critical syncs complete within an acceptable operational window.

- Automation safety: No workflow fires twice, and no lead is routed without the required context.

- Reporting trust: Attribution and lifecycle data remain usable in dashboards after sync.

Without those conditions, teams mistake technical connectivity for business readiness.
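One way to make those criteria concrete is to write them down as checks that return a plain pass or fail per record. The sketch below is only an illustration: the field names, the 15-minute window, and the `SyncResult` shape are assumptions, not values from Salesforce or HubSpot.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical field names and threshold; substitute your own mapping and SLA.
REQUIRED_FIELDS = ("email", "lead_source", "lifecycle_stage")
ATTRIBUTION_FIELDS = ("utm_source", "utm_campaign")
MAX_SYNC_DELAY = timedelta(minutes=15)


@dataclass
class SyncResult:
    record: dict            # the record as it landed in the destination system
    submitted_at: datetime  # when the source event occurred
    synced_at: datetime     # when the destination record was written
    workflow_runs: int      # how many times the follow-up workflow fired


def _filled(record: dict, fields: tuple) -> bool:
    """True when every named field is present and non-empty."""
    return all(record.get(name) not in (None, "") for name in fields)


def evaluate_pass_criteria(result: SyncResult) -> dict:
    """Return one boolean per pass criterion for a single synced record."""
    return {
        "data_integrity": _filled(result.record, REQUIRED_FIELDS),
        "timing": result.synced_at - result.submitted_at <= MAX_SYNC_DELAY,
        "automation_safety": result.workflow_runs == 1,
        "reporting_trust": _filled(result.record, ATTRIBUTION_FIELDS),
    }
```

The useful part is the agreement, not the code. Once the four keys exist, sales ops and marketing ops can argue about thresholds instead of arguing about what “working” means.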

Setting Up Your Integration Testing Environment

A reliable test suite starts with a reliable environment. If the environment is unstable, your team wastes time chasing false alarms, rerunning scripts, and debating whether a failure is real. That’s expensive in any stack. In Salesforce and HubSpot, it’s worse because environment drift easily hides mapping issues, permission conflicts, and automation side effects.

For Canadian teams, the environment question also includes data governance. Generic guides often miss the regional issue: cross-border data flows between US-based Salesforce or HubSpot and Canadian servers can lead to 28% higher failure rates, and only 15% of Canadian mid-market companies test API payloads for PIPEDA-compliant field mappings, based on an Art of Testing reference. If you don’t model those constraints in test, production becomes the first real compliance review.

Build the environment to answer real questions

Use the environment to validate behaviour, not just connectivity.

A practical setup usually includes:

- Salesforce sandbox selection: Use a Developer or Developer Pro sandbox for field mapping, validation rules, and Apex-triggered integration logic. Use a Partial Copy sandbox when testing realistic object relationships, automation dependencies, and reporting behaviours. The main point is control. You need a place where a failed sync doesn’t contaminate production records.

- HubSpot test account or developer setup: Keep test workflows, lists, forms, and custom properties separate from production. If your team reuses live assets for convenience, you’ll eventually test against changing logic and call it a “flaky integration”.

- Dedicated middleware or connector config: Whether you use native sync, custom API work, or middleware, create isolated credentials and endpoints for test execution so results stay repeatable.

Use test data that matches your process

Weak test data creates false confidence. If every sample lead looks perfect, your integration won’t be ready for production.

Include records that reflect the mess of real B2B ops:

- Missing values: Incomplete company names, absent phone numbers, blank campaign fields

- Conflicting values: Different lifecycle stages, region values, owner data, consent flags

- Duplicate patterns: Same email, different email on the same company, merged account histories

- Edge-case payloads: Long strings, special characters, unexpected nulls, outdated picklist values

Canadian teams should treat field-level privacy and consent values as first-class test data, not as documentation notes.

For PIPEDA-sensitive workflows, anonymise or synthesise data before loading it into test systems. Don’t copy production records casually, especially where consent, geographic data, or customer service history may travel across connected apps.
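A small, fixed set of synthetic leads is often enough to cover the categories above without touching production data. The sketch below is a starting point under stated assumptions: every field name is hypothetical, the emails use the reserved `.test` domain, and nothing here reflects a real HubSpot or Salesforce schema.

```python
import copy

# A clean synthetic baseline. No value here comes from a real person or company.
BASE_LEAD = {
    "email": "lead.one@example.test",
    "company": "Northwind Test Co",
    "phone": "+1-555-0100",
    "utm_campaign": "spring-launch",
    "lifecycle_stage": "marketingqualifiedlead",
    "region": "ON",
    "consent_email": True,
}


def variant(**overrides) -> dict:
    """Copy the baseline and apply the overrides for one scenario."""
    lead = copy.deepcopy(BASE_LEAD)
    lead.update(overrides)
    return lead


def build_test_leads() -> list:
    """Return a fixed, repeatable set of deliberately messy leads."""
    return [
        variant(),                                                 # clean control record
        variant(company="", phone=None, utm_campaign=""),          # missing values
        variant(lifecycle_stage="lead", consent_email=False),      # conflicting values
        variant(company="Northwind Test Company Inc."),            # duplicate: same email
        variant(email="lead.two@example.test"),                    # duplicate: same company
        variant(company="Société Éclair & Fils " + "x" * 300),     # long string, special characters
        variant(region=None, lifecycle_stage="Subscriber (old)"),  # null and outdated picklist value
    ]
```

Because the set is fixed rather than random, a failure on the third record today is a failure on the third record tomorrow, which keeps diagnosis short.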

Isolate dependencies with mocking

A mature environment doesn’t depend on every external service being available every time you test. That’s where service virtualisation and API mocking help.

When a Salesforce-HubSpot flow depends on enrichment tools, custom webhooks, or external scoring inputs, mock the responses so you can validate logic in isolation. This is also where tools such as Clay can support table-based API mocking and controlled data simulation for GTM workflows. The value isn’t novelty. It’s consistency. Your team can test lead scoring, routing triggers, and payload structure without waiting for every upstream dependency.
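As a minimal illustration of the idea, Python’s standard `unittest.mock` can stand in for an enrichment service so that routing logic is tested on its own. The `route_lead` rule, the `lookup` method, and the 500-employee threshold are all hypothetical; they are not part of any vendor API.

```python
from unittest.mock import Mock


def route_lead(lead: dict, enrichment_client) -> str:
    """Hypothetical routing rule that depends on an external enrichment lookup."""
    profile = enrichment_client.lookup(lead["email"])
    if profile is None:
        return "manual_review"  # enrichment had no match, so do not guess
    return "enterprise" if profile.get("employees", 0) >= 500 else "smb"


def check_routing_with_mocked_enrichment() -> None:
    """Exercise each routing branch without calling a real enrichment service."""
    enrichment = Mock()

    enrichment.lookup.return_value = {"employees": 1200}
    assert route_lead({"email": "cto@example.test"}, enrichment) == "enterprise"

    enrichment.lookup.return_value = {"employees": 40}
    assert route_lead({"email": "ops@example.test"}, enrichment) == "smb"

    enrichment.lookup.return_value = None
    assert route_lead({"email": "unknown@example.test"}, enrichment) == "manual_review"

    # The routing code should call the dependency exactly once per lead.
    assert enrichment.lookup.call_count == 3


check_routing_with_mocked_enrichment()
```

The same test gives the same answer whether or not the upstream tool is reachable, which is the consistency the mock is there to provide.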

A good environment should answer three questions clearly:

| Question | If the answer is no | Likely result |
|---|---|---|
| Can we run the same test twice and expect the same outcome? | Environment drift | False failures and low trust |
| Can we test privacy-sensitive mappings safely? | Weak data controls | Compliance exposure |
| Can we isolate one broken dependency? | No mocking or stubs | Slow diagnosis |

Core Test Cases for Salesforce and HubSpot

Once the environment is stable, the work becomes specific. Integration software testing should focus on the points where Salesforce and HubSpot disagree, overwrite each other, or fail without notification. Those are the moments that damage routing, attribution, and CRM trust.

A useful reference point comes from a 2023 MarTech project analysis. It found that 68% of initial integration failures stemmed from unmapped OAuth 2.0 token mismatches, and that enforcing schema validation pre-sync cut data corruption by 55%, according to a GeeksforGeeks-cited analysis. That tells you where to be disciplined. Authentication and payload structure deserve more attention than they usually receive.

If you’re mapping this against an actual deployment, this guide to Salesforce and HubSpot integration gives useful business context around the workflows these tests need to protect.

Four areas that deserve constant coverage

The baseline test suite should cover four categories.

Data mapping and validation

Many RevOps issues begin here. A field exists in both systems, but the allowed values, update rules, or business meaning don’t match.

Test cases should include:

- New record creation: A HubSpot MQL creates the correct Salesforce lead or contact, with expected values in source, owner, lifecycle, and consent-related fields.

- Bidirectional update logic: A sales status update in Salesforce changes the intended HubSpot property without overwriting fields marketing owns.

- Schema validation: Payloads must match expected formats before sync. This is especially important for picklists, dates, booleans, and nested objects in custom integrations.

- Duplicate handling: Existing Salesforce records should update when appropriate and avoid generating duplicate contacts or leads.
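For custom integrations, the schema validation case can start as a plain function that runs before the payload is sent. This is a sketch under assumptions: the property names, the allowed stage values, and the `mql_date` field are placeholders for whatever your own mapping defines.

```python
from datetime import date

# Assumed picklist; replace with the values your destination field accepts.
ALLOWED_STAGES = {"lead", "marketingqualifiedlead", "salesqualifiedlead"}


def validate_payload(payload: dict) -> list:
    """Return human-readable errors; an empty list means the payload may sync."""
    errors = []

    email = payload.get("email")
    if not isinstance(email, str) or "@" not in email:
        errors.append("email: missing or malformed")

    if payload.get("lifecycle_stage") not in ALLOWED_STAGES:
        errors.append("lifecycle_stage: not an allowed picklist value")

    # Booleans must be real booleans, not "true" or "yes" strings.
    if not isinstance(payload.get("consent_email"), bool):
        errors.append("consent_email: expected a boolean")

    try:
        date.fromisoformat(payload.get("mql_date"))
    except (TypeError, ValueError):
        errors.append("mql_date: expected an ISO date such as 2024-03-01")

    return errors
```

Returning every error at once, rather than stopping at the first, makes the failure readable for the ops person who has to fix the mapping.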

Triggers and automation logic

A field sync is only half the story. In most live stacks, that field starts another process.

Test whether:

- a HubSpot lifecycle change triggers the right Salesforce workflow

- a Salesforce owner assignment updates downstream nurture or suppression logic

- disqualification or recycling status prevents the wrong marketing automation from firing

- campaign membership changes preserve attribution rather than resetting it

If one field update can trigger three automations, test the sequence, not just the field value.
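Testing the sequence can be as simple as comparing an observed event log with the order you expect. The sketch below assumes you have already collected the automation names, in the order they fired, from sources such as HubSpot workflow history or Salesforce debug logs; the step names are hypothetical.

```python
def check_sequence(observed: list, expected: list) -> list:
    """Compare an observed automation log with the expected order.

    Returns a list of problems; an empty list means every expected step
    fired exactly once and in the expected order.
    """
    problems = []
    for step in expected:
        count = observed.count(step)
        if count != 1:
            problems.append(f"{step}: fired {count} times, expected 1")

    # Only compare order once the counts are right, to keep messages clear.
    relevant = [event for event in observed if event in expected]
    if not problems and relevant != expected:
        problems.append(f"order was {relevant}, expected {expected}")
    return problems


EXPECTED = ["assign_owner", "add_to_nurture", "notify_rep"]

# A rep notified before an owner exists is the kind of bug a field-value check misses.
print(check_sequence(["notify_rep", "assign_owner", "add_to_nurture"], EXPECTED))
```

Unrelated events in the log are ignored, so the check stays usable when other automations run alongside the ones under test.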

Authentication and security

Many teams test the happy path once, then assume auth is solved. It isn’t.

Validate:

- token refresh behaviour

- permission scopes for connected apps

- behaviour when credentials expire

- webhook verification where external services push data into the stack

- user-level access controls when sync logic depends on who can create, edit, or read records

This is also where adjacent integration patterns matter. If you’re reviewing AI-connected workflows, the HubSpot integration for Halo AI is a useful example of the kind of connected service architecture that should be tested for auth boundaries and data flow assumptions.
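The token refresh and credential expiry checks listed above can be exercised without a live connected app. The sketch below uses a hypothetical `SyncClient` wrapper and fake transport functions; it does not call any real Salesforce or HubSpot endpoint, and the refresh-once policy is an assumption you may want to change.

```python
class TokenExpired(Exception):
    """Raised by the transport when the API rejects the access token."""


class SyncClient:
    """Hypothetical wrapper that refreshes the token once when a push is rejected."""

    def __init__(self, send, fetch_token):
        self._send = send                # callable(token, payload) -> response
        self._fetch_token = fetch_token  # callable() -> fresh access token
        self._token = fetch_token()

    def push(self, payload: dict):
        try:
            return self._send(self._token, payload)
        except TokenExpired:
            # Refresh once and retry once; a second failure propagates to alerting.
            self._token = self._fetch_token()
            return self._send(self._token, payload)


def make_fakes(valid_token: str):
    """Build a fake transport that accepts only `valid_token`, plus a token issuer."""
    issued = []

    def fetch_token():
        issued.append(f"token-{len(issued) + 1}")
        return issued[-1]

    def send(token, payload):
        if token != valid_token:
            raise TokenExpired(token)
        return {"status": "ok", "token": token}

    return send, fetch_token, issued
```

Two scenarios matter here: the first token is stale and the refresh succeeds, and every token is rejected so the failure surfaces instead of looping silently.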

A practical test case checklist

| Test Category | Sample Test Case (HubSpot <> Salesforce) | Expected Result | Tools |
|---|---|---|---|
| Data mapping | HubSpot MQL creates Salesforce Lead with mapped source and lifecycle values | Correct record type, no blank required fields, no unexpected overwrite | Postman, sandbox records, connector logs |
| Validation | HubSpot sends invalid picklist or null required field | Sync fails visibly, error is logged, no partial bad record persists | Postman Newman, schema validation |
| Automation | Salesforce owner assignment updates HubSpot owner or routing property | Ownership remains aligned and follow-up workflow fires once | HubSpot workflow history, Salesforce debug logs |
| Duplicate control | Existing Salesforce Contact receives HubSpot update | Existing record updates correctly, duplicate isn’t created | Salesforce duplicate rules, test datasets |
| Authentication | OAuth token expires during scheduled sync | Connection refreshes safely or fails with clear alerting | Connector logs, API test scripts |
| Performance | Burst of form submissions hits the sync queue | Records process in order, no silent drops, retries behave correctly | Cypress, queue monitoring, middleware logs |

Performance and rate-limit behaviour

Peak traffic exposes weak assumptions. A sync that works at low volume can fail when campaigns launch, imports run, or sales ops modifies assignment rules during the day.

Test high-load and negative scenarios deliberately. Include retries, malformed payloads, and out-of-order updates. The point isn’t to prove perfection. It’s to find where the integration degrades and whether it does so safely.
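Retry behaviour is one of the easier pieces to test in isolation. The sketch below shows exponential backoff around a generic `send` callable, with the sleep function injected so a test can record delays instead of waiting. The `RateLimited` exception, the attempt count, and the delays are illustrative assumptions, not limits published by either platform.

```python
import time


class RateLimited(Exception):
    """Raised by the transport when the API signals a rate limit."""


def send_with_retry(send, payload, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call send(payload), backing off exponentially when rate limited.

    Re-raises RateLimited after the final attempt so the failure is visible
    rather than silently dropped.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return send(payload)
        except RateLimited:
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, 1s, 2s, ...
```

Passing `sleep=delays.append` in a test captures the backoff schedule in a list, which lets you assert both that retries happened and that they waited long enough.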

Automating Tests with a CI/CD Pipeline

Manual checks are useful for diagnosis, but they don’t scale with an active RevOps stack. Salesforce admins update flows. HubSpot admins change properties. Developers adjust middleware. Every change can break an existing integration path that no one intended to touch.

That’s where CI/CD changes the role of testing. Instead of becoming a release bottleneck, integration software testing becomes an automated gate that protects business workflows. In British Columbia, organisations using CI pipelines with automated integration tests achieved a 55% higher project success rate and reported 30 to 40% faster release cycles after adopting those tools, based on a DevDojo-cited report.

What should run automatically

A useful pipeline doesn’t try to automate everything at once. It automates the checks that break often and matter most.

That usually means:

- API contract tests whenever payload shape, field mapping, or auth logic changes

- End-to-end tests for lead creation, qualification, routing, and sync confirmation

- Regression suites after fixes to ensure one repaired path didn’t break another

- Smoke tests on core integrations before deployment to shared environments

Postman and Newman work well for API-level checks. Cypress is practical for browser-based flows where form submissions, embedded scripts, or visible state changes need validation. Jenkins and GitHub Actions are both suitable for orchestrating those runs when code, config, or integration assets change.

The CI/CD pattern that works for RevOps teams

The most effective pattern is simple:

1. Trigger tests on change: Run relevant integration checks when a pull request, configuration change, or deployment candidate is created.

2. Fail fast on core breakpoints: Stop the release if auth, field mapping, or critical workflow tests fail.

3. Promote only tested changes: Don’t let “small” mapping or automation updates bypass the same discipline as code.

4. Publish readable results: Ops and business owners should be able to see what failed without reading raw logs all afternoon.
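The fail-fast and readable-results steps can be sketched as a small gate script that a Jenkins or GitHub Actions job calls. Everything below is an assumption for illustration: the check names are placeholders, and each check would wrap one of your real integration tests.

```python
def run_gate(checks: list) -> list:
    """Run (name, check, critical) tuples in order; stop at the first critical failure."""
    results = []
    for name, check, critical in checks:
        try:
            passed = bool(check())
        except Exception as exc:  # a crashing check counts as a failure
            passed = False
            name = f"{name} ({type(exc).__name__})"
        results.append((name, passed))
        if critical and not passed:
            break
    return results


def summarise(results: list) -> str:
    """Render results as plain lines that a non-developer can read."""
    return "\n".join(f"{'PASS' if ok else 'FAIL'}  {name}" for name, ok in results)


def gate_passed(results: list, expected_count: int) -> bool:
    """The release proceeds only if every check ran and every check passed."""
    return len(results) == expected_count and all(ok for _, ok in results)
```

In a pipeline step, print `summarise(results)` and exit with a non-zero status when `gate_passed` returns false, so the release stops and the reason is visible in one screen.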

Automated testing is valuable when it shortens the distance between a change and a trustworthy answer.

For teams tightening release discipline, CloudCops’ CI CD pipelines guide is a solid operational reference. If you’re sorting out the terminology and workflow boundaries inside your own stack, this comparison of continuous integration vs continuous deployment helps frame where test automation belongs.

The win isn’t just speed. It’s that the ops team spends less time rerunning the same scenarios manually and more time improving scoring logic, routing design, and reporting quality.

Monitoring, Rollbacks, and Measuring Success

A deployment that passes test is not the finish line. Salesforce and HubSpot integrations change after launch because users change behaviour, admins change automation, and vendors update APIs. If you don’t monitor those shifts, your first signal of failure will come from sales asking why records look wrong again.

A 2024 Canadian report found that 62% of production outages traced back to untested API interactions, costing an average of CA$1.2M per incident. The same report found that monitoring with defined uptime SLAs and regression suites cut defect escape rates by 64%, according to a ThinkSys-cited methodology reference. That’s the business case for post-launch discipline.

Monitor the signals that matter to revenue

System uptime matters, but it’s not enough. An integration can stay “up” while sending bad data.

Track a mix of technical and RevOps indicators:

- Sync failures and retries: Look for repeated API errors, queue backlogs, and partial record updates.

- Data discrepancies: Compare lifecycle stage, owner, source, and status values across systems.

- Latency on critical flows: Watch for delays between form submission, CRM creation, and assignment.

- Business exceptions: Flag contacts without owners, leads without source, or opportunities disconnected from campaign context.
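The data discrepancy check is straightforward to prototype once both systems are exported into the same shape. The sketch below assumes records are keyed by email and that stage and owner values have already been normalised to a shared vocabulary; the field names are hypothetical.

```python
# Hypothetical shared field names after normalisation.
COMPARED_FIELDS = ("lifecycle_stage", "owner", "lead_source")


def find_discrepancies(hubspot: dict, salesforce: dict) -> list:
    """Compare records keyed by email and list every mismatch.

    Each issue is (email, field_or_reason, hubspot_value, salesforce_value).
    """
    issues = []
    for email, hs_record in hubspot.items():
        sf_record = salesforce.get(email)
        if sf_record is None:
            issues.append((email, "missing_in_salesforce", None, None))
            continue
        for field in COMPARED_FIELDS:
            if hs_record.get(field) != sf_record.get(field):
                issues.append((email, field, hs_record.get(field), sf_record.get(field)))
    return issues
```

Run on a schedule, the length of that list becomes a trend line: a sudden jump after a release is a stronger signal than any single mismatched record.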

A dashboard should tell both ops and leadership whether the integration is preserving pipeline quality, not just whether the connector is online.

Plan your rollback before you need it

A rollback plan should be written before deployment, not invented during an incident.

At minimum, define:

| Rollback element | What it should answer |
|---|---|
| Trigger | What specific failure condition requires rollback |
| Owner | Who can approve and execute it |
| Scope | Which automations, connectors, or sync jobs will be paused |
| Recovery | How you restore the last known good state |
| Validation | How you confirm records and reports are usable again |

In practice, rollback may mean disabling a sync, reverting a middleware release, restoring a prior mapping set, or pausing a workflow that is mutating records incorrectly. The best rollback plans minimise blast radius first. Cleanup comes after stability.

A rollback plan is part of integration design, not an emergency appendix.

Measure whether testing is actually working

The right KPI set is narrower than many teams think. I care less about how many tests exist and more about whether the programme improves operational confidence.

Review these outcomes regularly:

- Lower defect escape rate in production

- Less manual data cleanup by marketing ops and sales ops

- Better attribution consistency across dashboards and CRM reports

- Faster lead handling because routing data arrives cleanly

- Higher sales trust in CRM record quality

If those aren’t improving, the test suite may be technically active but strategically weak.
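Defect escape rate is the easiest of these to compute, provided the team logs where each defect was found. The sketch below assumes the common definition, defects found in production divided by all defects found, which may differ from how your own reporting defines it.

```python
def defect_escape_rate(found_in_production: int, found_before_release: int) -> float:
    """Share of all known defects that were first seen in production.

    Assumes the definition escaped / (escaped + caught before release).
    Returns 0.0 when no defects were recorded at all.
    """
    total = found_in_production + found_before_release
    if total == 0:
        return 0.0
    return found_in_production / total


# Example: 3 integration defects reached production, 9 were caught in test.
print(f"{defect_escape_rate(3, 9):.0%}")
```

Tracked per release, the number only means something if defects are tagged consistently, so agree on what counts as an integration defect before trusting the trend.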

If your Salesforce and HubSpot stack feels fragile, MarTech Do helps B2B teams audit integrations, tighten RevOps processes, and build testing discipline around the workflows that drive pipeline. Whether you need a one-time system review or ongoing support, the goal is the same. Cleaner data, safer releases, and a revenue engine your teams can trust.